Motivational quote.

List of Publications

Published and submitted content

The publications in the following list are partly included in the thesis. The inclusion of material from these sources is specified in each chapter where an inclusion occurs, using the corresponding uppercase letter and reference. The material included from these sources is not singled out with typographic means and references. The list is ordered chronologically.

- A. José Manuel Vega-Cebrián, Elena Márquez Segura, Laia Turmo Vidal, Omar Valdiviezo-Hernández, Annika Waern, Robby Van Delden, Joris Weijdom, Lars Elbæk, Rasmus Vestergaard Andersen, Søren Stigkær Lekbo, and Ana Tajadura-Jiménez. 2023. Design Resources in Movement-based Design Methods: a Practice-based Characterization. In Proceedings of the 2023 ACM Designing Interactive Systems Conference (DIS ’23), July 10, 2023. Association for Computing Machinery, New York, NY, USA, 871–888. doi.org/10.1145/3563657.3596036

- B. José Manuel Vega-Cebrián, Elena Márquez Segura, and Ana Tajadura-Jiménez. 2024. Towards a Minimalist Embodied Sketching Toolkit for Wearable Design for Motor Learning. In Proceedings of the Eighteenth International Conference on Tangible, Embedded, and Embodied Interaction (TEI ’24). Association for Computing Machinery, New York, NY, USA, Article 73, 1–7. doi.org/10.1145/3623509.3635253

- C. José Manuel Vega-Cebrián, Laia Turmo Vidal, Ana Tajadura-Jiménez, Tomás Bonino Covas, and Elena Márquez Segura. 2024. Movits: a Minimalist Toolkit for Embodied Sketching. In Proceedings of the 2024 ACM Designing Interactive Systems Conference (DIS ’24), July 01, 2024. Association for Computing Machinery, New York, NY, USA, 3302–3317. doi.org/10.1145/3643834.3660706

- D. José Manuel Vega-Cebrián, Elena Márquez Segura, María Fernanda Alarcón, Tomás Bonino Covas, Lara Cristóbal, Andrés A. Maldonado, and Ana Tajadura-Jiménez. 2025. Co-designing Minimalist Wearables to Support Physical Rehabilitation after Peripheral Nerve Transfer Surgery. doi.org/10.5281/zenodo.17903256

Other research merits

The peer-reviewed publications in the following list are not included in the thesis but constitute work that was done during the same period. The list is ordered chronologically.

- Robby van Delden, Dennis Reidsma, Dees Postma, Joris Weijdom, Elena Márquez Segura, Laia Turmo Vidal, José Manuel Vega-Cebrián, Ana Tajadura-Jiménez, Annika Waern, Solip Park, Perttu Hämäläinen, José Maria Font, Mats Johnsson, Lærke Schjødt Rasmussen, and Lars Elbæk. 2023. Technology, Movement, and Play Is Hampering and Boosting Interactive Play. In Companion Proceedings of the Annual Symposium on Computer-Human Interaction in Play (CHI PLAY Companion ’23), October 06, 2023. Association for Computing Machinery, New York, NY, USA, 231–234. doi.org/10.1145/3573382.3616050

- Laia Turmo Vidal, José Manuel Vega-Cebrián, Amar D’Adamo, Marte Roel Lesur, Mohammad Mahdi Dehshibi, Joaquín Díaz Durán, and Ana Tajadura-Jiménez. 2023. On Futuring Body Perception Transformation Technologies: Roles, Goals and Values. In Proceedings of the 26th International Academic Mindtrek Conference (Mindtrek ’23), November 02, 2023. Association for Computing Machinery, New York, NY, USA, 169–181. doi.org/10.1145/3616961.3616991

- Elena Márquez Segura, José Manuel Vega-Cebrián, Andrés A. Maldonado Morillo, Lara Cristóbal Velasco, and Andrea Bellucci. 2024. Embodied Hybrid Bodystorming to Design an XR Suture Training Experience. In Proceedings of the Eighteenth International Conference on Tangible, Embedded, and Embodied Interaction (TEI ’24), February 11, 2024. Association for Computing Machinery, New York, NY, USA, 1–12. doi.org/10.1145/3623509.3633362

- Laia Turmo Vidal, Ana Tajadura-Jiménez, José Manuel Vega-Cebrián, Judith Ley-Flores, Joaquin R. Díaz-Durán, and Elena Márquez Segura. 2024. Body Transformation: An Experiential Quality of Sensory Feedback Wearables for Altering Body Perception. In Proceedings of the Eighteenth International Conference on Tangible, Embedded, and Embodied Interaction (TEI ’24), February 11, 2024. Association for Computing Machinery, New York, NY, USA, 1–19. doi.org/10.1145/3623509.3633373

- Laia Turmo Vidal, José Manuel Vega-Cebrián, María Concepción Valdez Gastelum, Elena Márquez Segura, Judith Ley-Flores, Joaquin R. Diaz Duran, and Ana Tajadura-Jiménez. 2024. Body Sensations as Design Material: An Approach to Design Sensory Technology for Altering Body Perception. In Proceedings of the 2024 ACM Designing Interactive Systems Conference (DIS ’24), July 01, 2024. Association for Computing Machinery, New York, NY, USA, 2545–2561. doi.org/10.1145/3643834.3660701

- José Manuel Vega-Cebrián, Andreas Lindegren, Mafalda Gamboa, Ana Tajadura-Jiménez, Ylva Fernaeus, and Elena Márquez Segura. 2025. Embodied Ideation, Toolkits, and Sketching. In Proceedings of the Nineteenth International Conference on Tangible, Embedded, and Embodied Interaction (TEI ’25), March 04, 2025. Association for Computing Machinery, New York, NY, USA, 1–6. doi.org/10.1145/3689050.3708393

- Carlos Cortés, José Manuel Vega-Cebrián, Pablo Pérez, María Nava-Ruiz, Narciso García, and Elena Márquez-Segura. 2025. Embodied design for inclusive XR learning system. In 2025 17th International Conference on Quality of Multimedia Experience (QoMEX), September 2025. 1–4. doi.org/10.1109/QoMEX65720.2025.11219895

- Annika Waern, Lars Elbæk, Robby van Delden, José María Font Fernandez, Perttu Hämäläinen, Maximus D Kaos, Elena Márquez Segura, Maria Normark, Dees Postma, Dennis Reidsma, Lærke Schjødt Rasmussen, Ana Tajadura-Jiménez, Laia Turmo Vidal, José Manuel Vega-Cebrián, and Rasmus Vestergaard Andersen. 2026. Moving with method: using cards in movement-based design. Interact Comput 38, 2 (March 2026), 214–234. doi.org/10.1093/iwc/iwaf006

- Elena Márquez Segura, Inés Fernández Vallejo, Karunya Srinivasan, Marte Roel Lesur, Amar D’Adamo, Joaquín R. Díaz Durán, José Manuel Vega-Cebrián, and Ana Tajadura-Jiménez. 2026. i_mBODY Lab Demonstrates: Body Transformation Experiences with Multisensory Wearables. In Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems (CHI EA ’26), April 13–17, 2026, Barcelona, Spain. ACM, New York, NY, USA, 7 pages. doi.org/10.1145/3772363.3799377

Preface

Acknowledgements

I am very grateful.

1 Introduction

How can we design multisensory wearables to support physical rehabilitation?

- What are the design resources when designing for movement-based interaction? (This broadens the scope: which of them are relevant to the specific field?)

- What would a toolkit for design look like? Which elements are relevant to the specific field?

- Scope of the specific case.

- How would this extrapolate to other contexts? (Does it hold for other contexts?)

This is an Interaction Design project using movement-based design methods, involving multisensory feedback in response to movement, and implementing design concepts in minimalist technologies.

In this thesis, my main contributions are:

- An actionable overview of movement-based design methods, focused on the design resources of movement, space and objects.

- A minimalist toolkit of multisensory feedback probes with demonstrated potential to support ideation for movement-based interactive technologies.

- A nuanced account of a multi-stakeholder design journey within the medical context of peripheral nerve transfer surgery, along with insights and recommendations that could be extrapolated to other similar design processes.

- A set of smartwatch-based prototypes that originated from this design journey and have also demonstrated potential to support other physical rehabilitation and movement domains.

- A discussion on how these design methods, tools, processes and outcomes are intertwined and can support further developments in interaction design for movement applications.

In this thesis, I present how:

- Movement-based design methods are an appropriate way to design technologies for movement applications, including physical rehabilitation.

- Engaging with the stakeholders of a movement-based technology through movement-based design activities provides rich and meaningful design insights that would be missing when engaging with them only verbally (or not at all).

- Minimal interactive technologies, using multisensory feedback and built with off-the-shelf development platforms, can effectively support movement-based activities such as physical rehabilitation and training in their intended application domain and beyond.

- Nuanced accounts of design processes of interactive technologies, moving beyond narratives of success, are needed to better illuminate how and what to (not) design.

1.1 Context

Research projects: MeCaMInD, MovIntPlayLab, BODYinTRANSIT.

Initial objectives:

- To design playful and transformational multisensory interactive rehabilitation experiences featuring wearables, for use in real-life physical rehabilitation settings.

- To investigate the effects of the designed interactive experiences, including potential changes in perception and action.

- To explore, apply, and iterate on traditional and innovative embodied design methods, both to better understand the design context and target users and to generate, explore and evaluate innovative design ideas and prototypes.

- To create an embodied co-creation design kit with a set of tools that facilitate sessions with the target population and experts in relevant domains (e.g., design and rehabilitation).

- To extract intermediate-level design knowledge in the form of design guidelines, patterns, and recommendations for the design of playful and transformational multisensory interactive rehabilitation experiences featuring wearables.

TODO(further context) Because physical rehabilitation is a broad application domain, it was necessary to focus this work on a single case. The research projects that funded my work had already initiated a collaboration with the Peripheral Nerve Unit of Hospital Universitario de Getafe, in Madrid, Spain. I joined this effort to research how we could design playful wearables to support the rehabilitation of patients who had undergone peripheral nerve transfer surgery. In this type of surgery, surgeons reconnect nerves to bypass or replace damaged nerves. In such cases, rehabilitation involves recovering not only the mechanics of movement but also its neurological foundations. For the technologies that would support this kind of rehabilitation, I was interested in taking into account the perspectives and needs of both patients and medical personnel. This led to a complex co-design process that yielded rich insights and several prototypes that I developed based on them.

1.2 Positionality

first-person perspective [73].

1.3 Collaborators

During this thesis project, I collaborated with several people. Here, I list the acronyms I use to refer to them throughout the text, together with the roles they performed.

- EMS: Elena Márquez Segura, thesis advisor and tutor.

- ATJ: Ana Tajadura-Jiménez, thesis advisor.

- LTV: Laia Turmo Vidal, postdoctoral researcher.

- MRL: Marte Roel Lesur, postdoctoral researcher.

- JLF: Judith Ley-Flores, postdoctoral researcher.

- JDD: Joaquín R. Díaz Durán, research engineer.

- TBC: Tomás Bonino Covas, movement coach and physiotherapist.

- FA: Fernanda Alarcón, rehabilitation doctor.

- AMM: Andrés Maldonado, plastic surgeon.

- LC: Lara Cristóbal, plastic surgeon.

- OVH: Omar Valdiviezo-Hernández, doctoral researcher.

- KS: Karunya Srinivasan, doctoral researcher.

- RCR: Roberto Cuervo Rosillo, doctoral researcher.

1.4 Thesis Structure

Chapters 2 and 3 constitute the context for this work. In them, I introduce foundational concepts that situate and describe the kind of design research I engaged in during this thesis, mostly based on embodied interaction, multisensory feedback and Research through Design. In Chapter 2, I provide an overview of the theoretical concepts of action, perception and movement learning that inform this work. Additionally, I survey related work in HCI research along three axes: movement-based design methods, embodied ideation toolkits, and the design of interactive technologies for rehabilitation. These axes mirror the areas to which this thesis mainly contributes. Chapter 3 describes the main principles behind the methodology used in this work, Embodied Sketching and Research through Design, along with the design drives that characterised it: minimalism, open-endedness and generalisability.

Methods and tools: Chapter 4. Chapter 5.

Process and Prototypes: Chapter 6. Chapter 7.

Finally, in Chapter 8, I attempt to tie all the work together.

2 Background

This chapter draws on publications A, B, C, and D [189–192].

In this chapter,

2.1 Body, Perception and Action

TODO(general context)

TODO(“activity”)

2.1.1 Motor Learning

TODO(rework this. Add implicit vs explicit motor learning) The focus of attention refers to where a person directs their attention while performing a certain movement [116]. An external focus of attention consists of directing the learner’s focus to the effects of their movements on the environment, such as on an apparatus or implement [207]. In contrast, an internal focus of attention consists of concentrating on one’s own body while performing a movement [116]. Existing studies on attentional focus have generally recognised the benefits of adopting an external rather than an internal focus for motor learning and performance in a variety of practices, such as golf [89], tennis [206], standing long jump [205], swimming [163], vertical jumping [1], throwing [210], and striking combat sports [65].

2.1.2 Intercorporeal Biofeedback

My thesis work is influenced by the strong concept [75] of Intercorporeal Biofeedback [183], which proposes that interactive technology can act as a mediator supporting joint sensemaking of bodily processes among different actors [183]. TODO(a strong concept is…).

Articulated through design work focused on practices of movement teaching and learning, the Intercorporeal Biofeedback concept presents a way to design biofeedback technology that fulfils such a role. It proposes four core characteristics that have served as guideposts for the technologies I designed for this thesis. First, an intercorporeal biofeedback tool should provide a shared frame of reference, so that the biofeedback is accessible to different people at the same time, e.g., by using audiovisual or visuotactile feedback rather than only vibrotactile feedback. This creates a frame that involved parties can refer to in their sensemaking processes. Second, such a tool should support fluid meaning allocation, i.e., in-the-moment, constructive meaning-making by people, by favouring open-endedness [186] in the feedback representations. Third, it should support guiding attention and action, letting the focus of attention fluctuate between the body, the biofeedback, the tight loops between them, and the instructions provided by observing peers [183]. Finally, it should be designed as an interwoven interactional resource, used alongside a wider variety of interactional resources such as verbal instructions, demonstrations, and material equipment [183], so that the technology does not become the sole focus of the interaction. TODO(maybe develop more?)

2.2 Movement-based Design Methods

Given TODO(the renewed importance of the body in action / HCI waves)… Movement-based Design Methods have been proposed and used by the Interaction Design and HCI communities. In the following, I briefly present methods and strategies that have been historically relevant to the trajectory of movement-based design research in HCI.

First of all, Bodystorming is a situated generative design method focused on generating multiple design ideas. In contrast to brainstorming, bodystorming uses full-body engagement with objects, the space and other people to come up with ideas. There have been several proposals regarding bodystorming, exemplifying how movement-based design methods are often appropriated, adapted and tweaked to fit a specific design agenda and design process. For instance, in 2003, [129] focused on carrying out ideation sessions in the very context in which designs will be used. Later, in 2010, [146] articulated bodystorming as three different approaches: prototyping using enactment; physically emulating the spatial environment in which technology will be used to generate/evaluate ideas in context; and employing actors and props to play out expected use case scenarios.

More recently, [107] advanced bodystorming for movement-based interaction as a generative strategy to develop ideas from scratch, emphasizing its playful and participatory components. Later on, [184] introduced Sensory Bodystorming, which bridges bodystorming and material ideation approaches. This method uses non-digital materials and objects with different sensory qualities to foster exploration and ideation of sensing/actuating possibilities. Finally, [200] proposed Performative Prototyping, which combines bodystorming methods and Wizard of Oz techniques with a puppeteering approach in collaborative mixed-reality environments. With this approach, they claim to leverage both somaesthetic and dramaturgical perspectives, the former conceived as a point of view from the inside out and the latter from the outside in.

[145] argued for the importance of somatic facilitation during a technological design process and named it the practice of Somatic Connoisseurship. A careful and trained focus on lived bodily experience can enrich the design space in Interaction Design and HCI [145].

Relatedly, Soma Design [72–74,178] refers to a holistic design process that builds upon the ideas of Somaesthetics [148,149]. It connects sensations, feelings, emotions, and subjectivity in participants’ bodies and aims to examine and improve them. These frameworks emphasize introspection, slowness, increased awareness, and the use of sensitizing activities and body maps.

On a similar note, Embodied Sketching [108] encompasses movement-based ideation practices that harness a combination of physical engagement in the surrounding context with play and playfulness to elicit a creative mindset. This context includes the social and spatial settings along with digital and non-digital artefacts, which act as catalysts of engagement and idea generation.

Estrangement, which refers to the process of turning “the familiar” upside-down and making it unfamiliar, is also a common resource and an important component of Soma Design TODO(confirm references) [72–74,178]. [201] analyzed the use of estrangement as a powerful approach in embodied design methods. Estrangement can be used to inspect and experiment with already-known practices, movements and actions, causing a disruption that makes the familiar tangible or visible. Estrangement can be used to arrive at new kinds of movements, objects or design concepts [201].

In the same line, with Moving and Making Strange, [97] centred bodies and movement in the design process using a choreographic approach. The work foregrounded the use of choreographic strategies, such as exploring variations of movement qualities including speed and direction, as possible ways to defamiliarize everyday movements and arrive at interesting interaction possibilities. Their emphasis was on the first-person perspective of the mover, alongside the third-person perspectives of the observer and the machine. Relatedly, [17] also argued for the use of estrangement to open design spaces, specifically in the context of the design of home appliances.

Role-playing as a method involves deliberately assuming a character role and playing out a more or less defined scene or script, with or without props [150]. It can be used throughout the whole design process: to discover and identify issues to solve; to observe and understand the design context and target users; to generate new ideas; to evaluate them; and to communicate them. Informances [27,150] are an example of role-playing that combines performance, scenario-based design, and Wizard of Oz to simulate and improvise generative-oriented situations involving future technology. In Informances, simple props are often used to simulate and recreate the technology and key contextual elements of the scenario. A more elaborate form of role-play is Larping (Live Action Role Playing), which involves complex and well-crafted simulations, character descriptions, narrations and strategies for representation [104]. Larping has the potential to cultivate deeper connections between participants and their characters and can be used as a sensitizing activity or as a stage for testing and evaluating design concepts and prototypes [104].

Other methods that are used as references and inspiration are Service Walkthrough [24] and Interaction Relabelling [45]. Even though these were not originally proposed as movement-based design methods per se, they similarly entail physical engagement with artefacts and the environment. Additionally, others cite them as relevant methods [17,97]. Service Walkthrough [24] is a design technique that facilitates and guides the physical representation and enactment of service moments or stages in order to prototype and evaluate them. While the entire service journey is walked through, feedback can be gathered on the process as a whole or on each moment or stage of the journey. Interaction Relabelling [45] supports the ideation of novel forms of interaction with electronic devices by asking participants to use an existing product as if it were the intended design. The resulting interactions are mapped and evaluated. When the products are quite different from the intended designs, they may lead to creative ideas and concepts.

Finally, paper cards are a common physical resource in movement-based design methods. They are used to provide descriptions and instructions [119], to aid in ideation and reflection [175], as a tool for documenting design constructs in workshops [144,186], or as rule facilitators of body play [114]. TODO(mecamind reference. In fact…)

2.2.1 Classifications of Movement-based Design Methods

As part of this thesis, in Chapter 4, I describe a characterisation endeavour which had the objective of elucidating and mapping common features of movement-based design methods, to aid in their understanding, usage and reappropriation. In the following, I present previous works that have similarly addressed the need for a comprehensive framework to understand, describe and appropriate such design methods.

To approach the analysis of embodied design methods, a couple of works have focused on a single yet powerful dimension as their starting point. For instance, [201] proposed and used a framework to analyze embodied design ideation methods with a focus on estrangement. In the work, they interrogated: (1) What is being done to cause a disruption; (2) What is destabilized by this disruption; (3) What emerges from the process, and (4) What is embodied, e.g. made tangible or visible from doing it [201]. This framework was used to analyze eight embodied design methods.

Alternatively, [97] focused on the first-person perspective of the person in movement. From there, they proposed a design methodology based on a whole set of choreographic tools, and grounded in prior interactive design projects from the same authors.

In contrast to these two works, the analysis I present in Chapter 4 followed a bottom-up approach to map the characteristics of a larger corpus of movement-based design methods. With this approach, the objective was to obtain a set of general categories that would make it possible to describe the elements in play when implementing these methods in practice.

In another work, [5] analysed 23 methods in seven articles and constructed a typology of movement-based design methods based on the following seven foci: (1) Sensing; (2) a Playful approach; (3) an Experimental approach; (4) Props, Artifacts and Technology; (5) Enactment; (6) Social Interaction; and (7) Specific Context. Simultaneously, they classified the methods by the design stage in which they were used: the Divergent, Explorative or Convergent stage.

I would argue that a limitation of this approach is that each method is pigeonholed into a single focus when it could be present in more than one. Thus, it can be difficult to see how the seven dimensions relate to each other. Additionally, there is no clear path for using the classification to implement one’s own methods. In contrast, in the work I present in Chapter 4, the categories are not mutually exclusive, so a single method can be described by several of them at the same time. Further, they were made actionable by providing recommendations and considerations for the reader.

2.3 Embodied Ideation Toolkits

In this thesis, in Chapter 5, I present the design process of a minimalist toolkit that could support embodied sketching [108] focused on applications of multisensory feedback in response to movement. In the following, I describe related embodied ideation toolkits that have been designed and employed in the HCI community, given that movement-based design research has often explored, produced and employed toolkits and probes to facilitate design sensitisation, exploration and ideation.

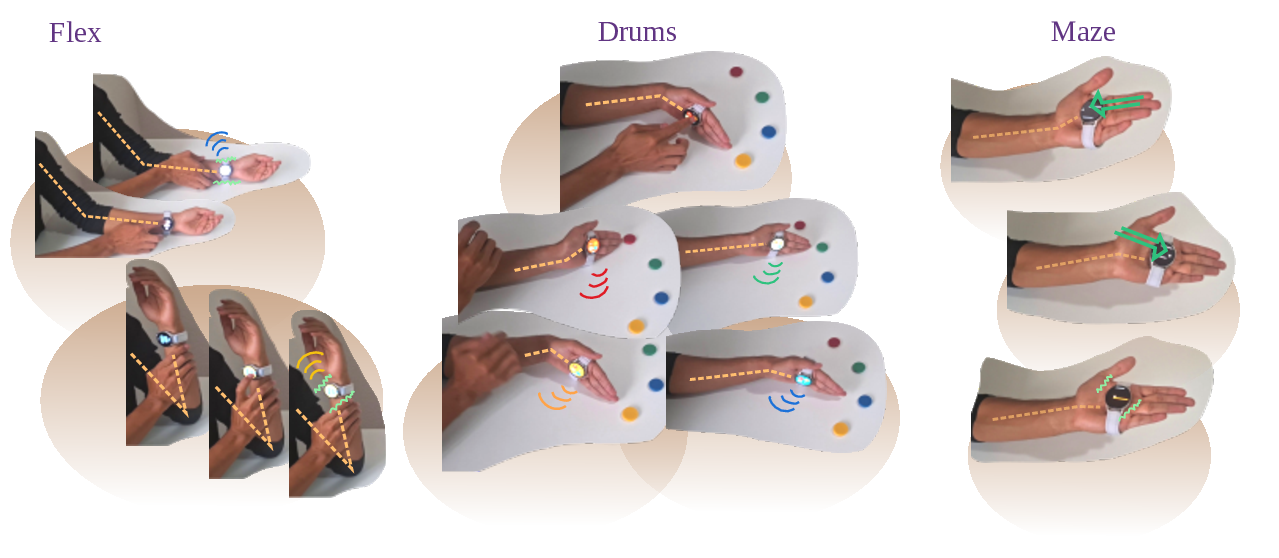

First of all, I wish to highlight some foundational works. Here I include the concept of technology probes: simple, flexible and adaptable technologies designed to inspire users and researchers to ideate new technologies [80]. The Inspirational Bits [165] consisted of multiple units that exposed the workings of common technologies and input modalities. The Embodied Ideation Toolkit [79,153] involved the curation, design and use of multiple tangible objects to support embodied co-design processes with the participation of diverse stakeholders. TODO(expand more on these?)

Similarly, my work draws from toolkits and probes often employed in Soma Design [72,74] processes, such as the Soma Bits [202,203]. The Soma Bits were introduced as a kit of objects that allow exploring haptic modalities—vibration, heat, and inflatables—at varied levels of intensity and in different parts of the body [202,203]. The kit combines the Soma Bits—the devices consisting of electronic actuators, control units, knobs and power—with the Soma Shapes—soft and diverse objects with pockets to place the Bits [202,203]. Related to the Soma Bits, but not developed as a toolkit per se, the Felt Sense Glove [125,126] and Sense Pouch [125] were ideation probes that supported exploration of the effects of heat and vibration in people’s somatic experiences. In a similar line, but intended as an open-ended prototyping toolkit to design wearable menstrual technologies for young adolescents, the Menarche Bits [154,155] consisted of custom shape-changing actuators and heat pads.

Another line of research into toolkits concerns designing individual modules that can be interconnected and used to explore and prototype wearables and e-textiles. For example, the Wearable Bits [83] were a modular set of patches of different levels of fidelity with common electronic components—sensors and actuators—that could be arranged according to one’s design and prototype. The Kit-of-No-Parts approach [132] consists of handcrafting textile interfaces—such as tilt, pressure or stroke sensors—from scratch so that one can personalize, understand and share them.

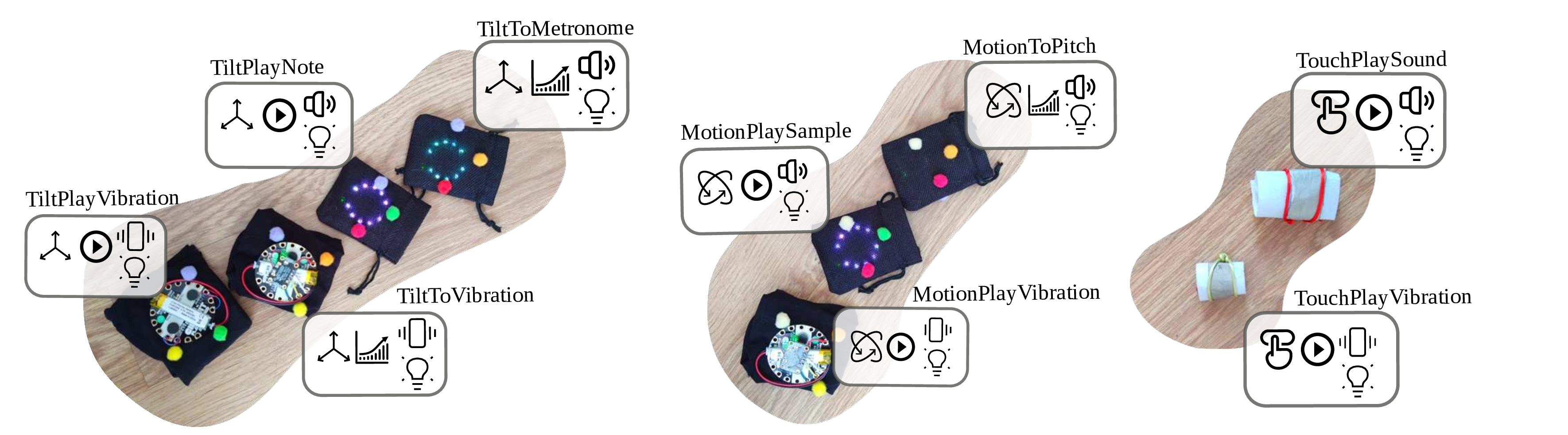

The DanceBits were a wearable prototyping kit for dance, co-developed with a justice-oriented computing and dance education organization [41]. The DanceBits provided several input components, such as buttons and tilt sensors, and output components, such as different types of lights, that could be easily interconnected to design and perform choreographies while wearing electronic costumes [41]. Focusing on haptic feedback, the TactorBots [211] consisted of a toolkit of multiple wearable units, each providing a different type of touch gesture. The touch gestures implemented in these units arose from an analysis of prior work, similar to the process I followed for the toolkit presented in Chapter 5. The design process of the TactorBots resulted in a comprehensive toolkit that could render all touch types, could be worn anywhere on the body, and could be used in the wild [211]. From all these kits, I took inspiration from how they identified and built minimal modules, each with a single function.

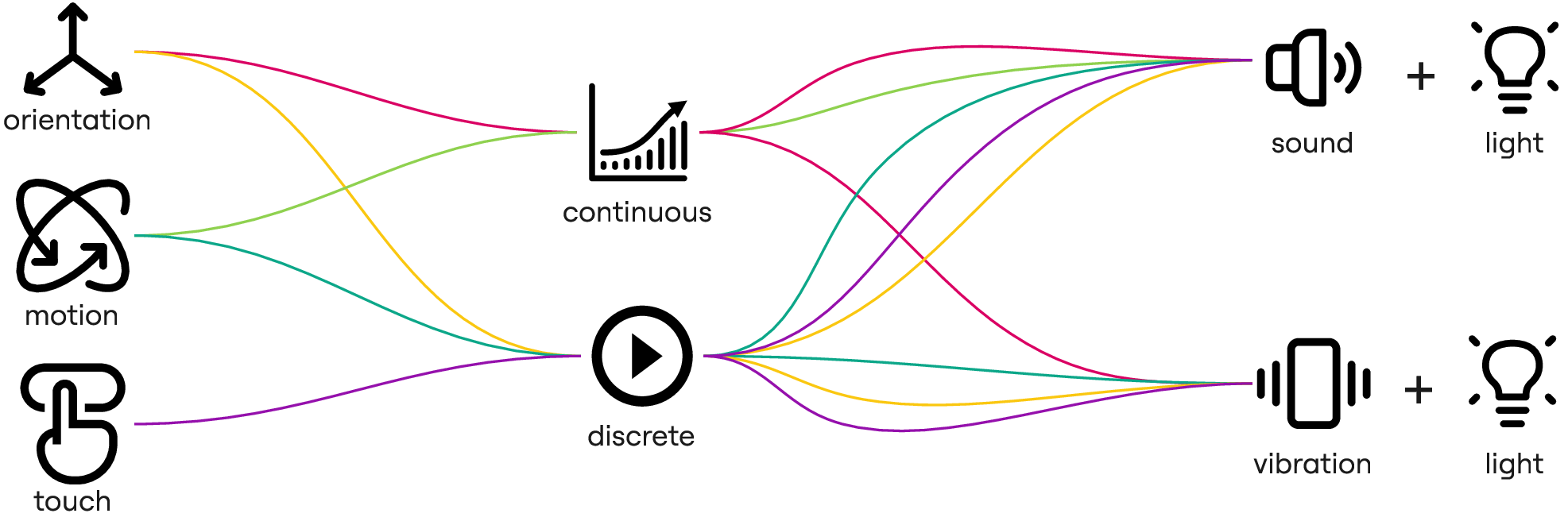

More directly related to my work on movement feedback are the Training Technology Probes (TTPs) [105,106,182]. These were a collection of simple wearable devices that sensed a few body parameters, such as movement speed, body orientation or breathing, and provided feedback loops through different sensory modalities, e.g., lights, sound and vibration mapped to orientation or motion [105,106,182]. They emerged from diverse embodied sketching activities [108,184]. While they were not specifically designed as an ideation toolkit, they lent themselves to being appropriated, iterated, and redesigned for use in diverse contexts related to motor learning and training. For instance, the TTPs have been used in a multitude of projects relating to motor learning in circus training, yoga, weightlifting and physical training in general [105,106,180–182,186]. This was due to key properties of the TTPs, such as their simplicity, open-ended feedback and use of redundant multisensory feedback for the wearer and others, all of which I took as inspiration for my toolkit.
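To make the idea of a single sensed parameter driving redundant multisensory feedback more concrete, the following is a minimal Python sketch. It assumes a wearable that reports one tilt angle in degrees; the `Feedback` record, the `orientation_to_feedback` function and every numeric range are hypothetical illustrations chosen for this example, not the TTPs' actual implementation.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    """Redundant feedback for one sensor reading (hypothetical channels)."""
    led_brightness: int  # 0-255, visible to the wearer and to onlookers
    tone_hz: float       # pitch of an audible tone, also shared with others
    vibration: float     # 0.0-1.0 motor intensity, felt only by the wearer


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def orientation_to_feedback(tilt_deg: float,
                            max_tilt_deg: float = 90.0,
                            low_hz: float = 220.0,
                            high_hz: float = 880.0) -> Feedback:
    """Map one tilt angle to light, sound and vibration at the same time.

    All ranges are illustrative assumptions, not values from the TTPs.
    """
    # Normalise |tilt| to [0, 1] so every channel shares one scale.
    level = clamp(abs(tilt_deg) / max_tilt_deg, 0.0, 1.0)
    return Feedback(
        led_brightness=round(level * 255),
        # Exponential pitch mapping: equal angle steps give equal pitch intervals.
        tone_hz=low_hz * (high_hz / low_hz) ** level,
        vibration=level,
    )


if __name__ == "__main__":
    for angle in (0, 45, 90, 120):
        print(angle, orientation_to_feedback(angle))
```

Because all three channels derive from the same normalised level, they carry the same information in different modalities: the light and the tone can be perceived by onlookers, whereas the vibration stays private to the wearer.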

My work draws inspiration from these prior toolkits and probes in different ways. For instance, this work shares with the Soma Bits [202,203], Menarche Bits [154,155], Wearable Bits [83] and TTPs [105,106,182] the values of minimalism and an integral understanding of embodied experiences, where technology is not the sole focus. The insights from studies with the TTPs [105,106,182] formed the empirical grounding of the Intercorporeal Biofeedback strong concept [183], which I used as an analytical lens in my work. Additionally, I took from the Embodied Ideation Toolkit [79,153] and the description of bodystorming baskets [191] the approach of bringing a variety of small, readily available probes to help stakeholders engage in embodied design activities. Finally, my work shares with the Wearable Bits [83], Soma Bits [202,203], TactorBots [211], Kit-of-No-Parts [132] and DanceBits [41] a design approach based on individual modules with identifiable functions, which can be adapted to different situations.

2.4 Interactive Technologies for Rehabilitation

TODO(intro, connect with Chapter 6)

In this section, I focus on designs and design processes supporting neurorehabilitation, especially of conditions affecting the upper limbs. I focus on conditions whose rehabilitation shares needs and characteristics with rehabilitation after peripheral nerve transfer surgery, such as stroke, spinal cord injury, or chronic pain.

In the health domain, Tangible User Interfaces have been employed for purposes such as promoting health, facilitating diagnosis, facilitating or improving the rehabilitation process, improving everyday life or practitioner work, and providing mental and social support [20]. Furthermore, rehabilitation is the second most targeted medical field in tangible design, where tangible interfaces have been shown to be useful for facilitating or improving the rehabilitation process [20].

Several types of tangible and interactive technologies have been designed and researched to support physical rehabilitation processes [20]. Many examples have combined these technologies with playful or game-like features to create Exertion Games (Exergames) for rehabilitation. For instance, some projects have focused on the affordances of Virtual Reality (VR) or Mixed Reality for this purpose. Exergames have been developed to support the rehabilitation of spinal cord injury [130], stroke [14,90], or the upper limbs in general [15,55,208]. [30] interviewed several physical therapists after playtesting a commercial VR game, to evaluate the potential of these technologies to support physical therapy, and reported a positive outlook on the possibilities of such games. Some of these works have involved interaction not only with the VR system but also with robotic devices (arms or exoskeletons) [55,208] or with custom wearable sensors [90,91].

Relatedly, a strand of work has employed external computer vision sensors, such as the Kinect or Leap Motion, to estimate the position of the limbs and use it to act upon games or experiences [49,53,54,138,142]. These technologies free the patient from wearing any device, but they require setting up a space with a specific configuration in order to leverage the sensing they provide. All these Exergame works point to the advantages of using game-related features to support rehabilitation, by promoting engagement and providing additional anchor points for motivation.

Regarding wearable devices, a line of work has developed, tested and explored custom e-textile sensors for joint position estimation. For example, in SeamSleeve [139], E-Serging [81] and ReKnit-Care [77], the researchers investigated several possibilities for embedding conductive seams within garments for motion detection. These e-textile sensors and actuators remove the need for wearable integrated circuits or external computer vision sensors, and are promising for physical rehabilitation applications. They allow fully custom-fit designs, which could also support personalisation to the wearer's needs. However, they tend to require specialised equipment, which can make them challenging to replicate.

2.4.1 Applications of Multisensory Feedback

Sensory feedback on movement has been increasingly used in physical activity and physical rehabilitation to motivate, inform and guide people. For instance, real-time auditory feedback can provide additional information on movement, such as movement trajectories or qualities, to aid movement execution, control and sensorimotor learning [22,143], or to support the reacquisition of lost motor capabilities [194], such as those lost following a stroke [147,198]. Sensory feedback on movement can further address the underlying psychological barriers or needs that prevent people from engaging in physical activity or rehabilitation [134]. For example, in the Go-with-the-flow project [152], movement sonification conveyed information about the movement angle and its start and end, to help people with chronic pain build confidence in physical activity.
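To make the idea of real-time movement sonification more concrete, the sketch below shows one simple way of mapping a movement angle to pitch and marking the start and end of a movement with discrete cues. It is a hypothetical illustration, not the implementation used in Go-with-the-flow [152]: the function `angle_to_frequency`, the class `Sonifier`, and all angle, frequency and threshold values are assumptions chosen for readability, and the returned strings stand in for calls to an actual audio engine.

```python
from dataclasses import dataclass

# Hypothetical parameters (assumptions, not taken from any specific system).
MIN_ANGLE_DEG = 0.0         # angle at rest
MAX_ANGLE_DEG = 90.0        # angle at the end of the target movement
MIN_FREQ_HZ = 220.0         # pitch played near rest
MAX_FREQ_HZ = 880.0         # pitch played at the target angle
MOVING_THRESHOLD_DEG = 5.0  # above this angle, a movement counts as started


def angle_to_frequency(angle_deg: float) -> float:
    """Linearly map a movement angle, clamped to the expected range, to a pitch."""
    clamped = max(MIN_ANGLE_DEG, min(MAX_ANGLE_DEG, angle_deg))
    ratio = (clamped - MIN_ANGLE_DEG) / (MAX_ANGLE_DEG - MIN_ANGLE_DEG)
    return MIN_FREQ_HZ + ratio * (MAX_FREQ_HZ - MIN_FREQ_HZ)


@dataclass
class Sonifier:
    """Turns a stream of angle samples into sound events (represented as strings)."""
    moving: bool = False

    def update(self, angle_deg: float) -> list[str]:
        events: list[str] = []
        if not self.moving and angle_deg > MOVING_THRESHOLD_DEG:
            self.moving = True
            events.append("start_cue")  # discrete cue: movement started
        elif self.moving and angle_deg <= MOVING_THRESHOLD_DEG:
            self.moving = False
            events.append("end_cue")  # discrete cue: movement ended
        if self.moving:
            # Continuous cue: the pitch follows the current angle.
            events.append(f"tone:{angle_to_frequency(angle_deg):.0f}Hz")
        return events


if __name__ == "__main__":
    sonifier = Sonifier()
    for angle in [0.0, 20.0, 45.0, 90.0, 30.0, 2.0]:
        print(angle, sonifier.update(angle))
```

A linear angle-to-pitch mapping is only one option; a sonification design may instead use other mappings (e.g. stepped pitches or changes in timbre) and would typically smooth the sensor signal before sonifying it.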

Haptic feedback represents a flexible channel for conveying information, with vibration emerging as the most frequently used modality in this space TODO(elaborate on and separate all these references) [9,18,21,67,105,156,157,167,174,204]. Other forms of haptic feedback used to support body awareness include thermal cues [36,84] and pressure-based feedback [85,184]. Vibratory cues are often applied to prompt posture correction [18,204] or to deliver instructional signals during movement [105,156,157], and they can also be designed to evoke a range of bodily sensations. For example, metaphor-driven vibration patterns have been shown to elicit altered perceptions of body shape, posture, size, or weight [171], or to modulate bodily experience in ways that promote body awareness and greater engagement in physical activity among inactive populations [94]. Such metaphorical cues can further support movement quality, for instance by creating sensations of being “pulled” upward or “pushed” downward during squat exercises, which may help guide proper execution [151].

A recent line of research has also explored the potential of altered feedback, which modifies the perceived body or body movement instead of providing accurate movement information, to address psychological factors related to physical activity and rehabilitation. This approach can, for instance, create the perception of a lighter and more capable body [170] TODO(amar references), evoke sensations of being “pushed” by sound or vibrotactile feedback [151], or alter the perceived weight of a body part to influence movement execution, such as reducing gait asymmetry in chronic stroke patients [62]. Real-time sensory feedback on movement can therefore be a powerful design material for supporting rehabilitation processes.

2.4.2 Smartwatches and Mobile Devices

To make it feasible to deploy and evaluate research designs in the wild, some works have reappropriated readily available wearable technologies, such as commodity smartwatches or mobile phones. These devices provide an assortment of sensors, such as accelerometers, gyroscopes, magnetometers, heart rate sensors, cameras and more, along with the processing capabilities needed to extract and analyse movement data. For example, the aforementioned Go-with-the-flow project [152] used a mobile phone worn on a belt to provide real-time feedback to people with chronic pain based on motion sensor data. Also using a mobile phone, [172] leveraged the device's camera to perform computer vision-based motion tracking and provide gamified feedback.

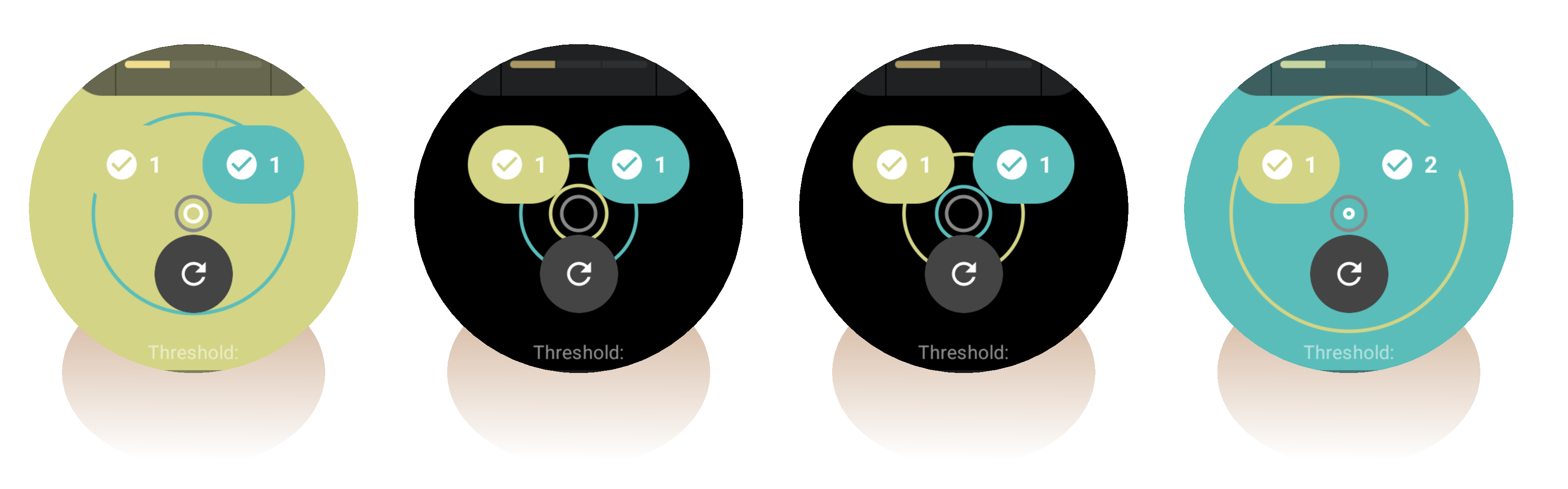

Nowadays, most smartwatches are geared towards physical activity and exercise. For example, they track activity and rest during the day, and can detect and provide detailed data on specific types of sports and exercises, including strength training movements, although not necessarily for rehabilitation purposes. Given their compact form factor and their compatibility with custom fabric straps that extend their wearability, these devices can be a very appropriate platform for exploring and prototyping wearable interactions, especially because some of them allow the development and installation of custom software (apps), most notably those based on Wear OS by Google and watchOS by Apple. By developing for these platforms, one can leverage the existing hardware and operating system and focus on prototyping the interactions. This was especially relevant for me because custom hardware tends to be more expensive to create, control, modify, maintain and distribute [68], and in contexts where financial, time, or labour resources are scarce, there is a need to consider how to design technologies within limits [37]. TODO(expand on the LIMITS angle, from demo paper)

Building on the capabilities of programmable smartwatches, several works have explored how to use them to support physical rehabilitation. For instance, some works [60,141] have investigated the potential of smartwatches for detecting and evaluating rehabilitation movements, with promising results regarding accuracy. In particular, [60] analysed 43 works that used the smartwatches' built-in accelerometers, gyroscopes and magnetometers as inputs to different detection algorithms to achieve high-precision gesture recognition.

For arm rehabilitation, [141] focused on detecting and providing feedback on quality factors of rehabilitation exercises, such as missed repetitions and duration. Similarly, to support real-time movement feedback for upper-limb rehabilitation, some works have taken advantage of pairing a smartwatch with a mobile phone, using the former as a sensor array and controller and the latter as a data visualiser [28,113], a game screen [29], or an additional sensor array [43]. Others have studied the effects of providing feedback on activity levels over time during rehabilitation, showing promising results [47,177]. Most of these works focus either on the movement detection algorithms or on the effects of the feedback on patients, whether in real time or over longer periods.
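As a rough illustration of how repetitions, their duration, and missed repetitions might be derived from a smartwatch's motion sensors, the sketch below applies a threshold-crossing rule with hysteresis to a one-dimensional signal (for instance, a smoothed acceleration magnitude or a forearm tilt angle). It is an assumption-laden example, not the detection algorithm of [141] or of the works reviewed in [60]; the functions `detect_repetitions` and `summarise_set`, the thresholds, and the sampling rate are hypothetical, and reading the sensors themselves through Wear OS or watchOS is left out.

```python
# Hypothetical repetition detector for a 1D motion signal sampled at a fixed rate.
# Assumes the signal has already been smoothed and oriented so that each
# repetition appears as a rise above `high` followed by a fall below `low`.


def detect_repetitions(samples: list[float], rate_hz: float,
                       high: float, low: float) -> list[tuple[float, float]]:
    """Return (start_s, end_s) for each complete repetition in `samples`.

    Two thresholds (hysteresis) avoid counting small fluctuations around a
    single threshold as extra repetitions. A repetition still in progress
    when the signal ends is not reported.
    """
    if low >= high:
        raise ValueError("low threshold must be below high threshold")
    repetitions = []
    start_index = None
    for i, value in enumerate(samples):
        if start_index is None and value > high:
            start_index = i  # signal rose above the upper threshold
        elif start_index is not None and value < low:
            repetitions.append((start_index / rate_hz, i / rate_hz))
            start_index = None  # signal fell below the lower threshold
    return repetitions


def summarise_set(repetitions: list[tuple[float, float]], expected: int) -> dict:
    """Summarise one exercise set: count, missed repetitions, and durations."""
    durations = [end - start for start, end in repetitions]
    return {
        "count": len(repetitions),
        "missed": max(0, expected - len(repetitions)),
        "durations_s": durations,
    }


if __name__ == "__main__":
    # Synthetic signal at 10 Hz containing two repetitions, for a set of three.
    signal = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 1.0, 1.0, 0.0]
    reps = detect_repetitions(signal, rate_hz=10.0, high=0.5, low=0.3)
    print(summarise_set(reps, expected=3))
```

Fixed thresholds keep the sketch readable, but they would likely need per-person calibration in practice, given how much range of motion can vary between patients and over the course of rehabilitation.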

2.4.2.1 Critical Perspectives to Design Smartwatch Applications

Despite their ubiquity, commercially available smartwatches and fitness trackers embody several design decisions that prior work has problematised, as they tend to take a normative and healthist approach to activity tracking [159]. First, it has been argued that these devices extend a line of thinking that emphasises an “individual responsibility for health, well-being, and self-knowledge” [46] instead of providing or cultivating a more suitable social support system. Relatedly, they rest on the assumption that tracking more data is better, because it would help wearers better understand what they do and how to change it [120].

Additionally, many of these devices show a degree of opacity [209] in the measures they present to the wearer, such as number of steps [159,209] or stress scores [19], which might not always match the wearer's experience [209]. This becomes more problematic when those measures are reductively taken as signs of fitness and health (more steps and less stress are better) without accounting for the wearer's actual context and needs [19,159,209]. In activity tracking (e.g. in sports), there might be a mismatch between the data that are captured and the data that would give the wearer a better understanding of the activity, such as “felt, emotional, and contextual aspects” [61] or broader temporal windows surrounding the activity [61].

In the case of chronic conditions, tracking technologies could support more nuanced records of activities and their context [61], while acknowledging that what the wearer may need is to limit their activity levels and balance exertion with rest [69–71]. To illustrate this point, [71] investigated how commercial fitness trackers were reappropriated and (mis)used to pace energy levels, identifying tensions between this use and the intended one, while also demonstrating that the devices could technically support these alternative goals.

2.4.3 Designing Technologies for Rehabilitation

In this work, I take inspiration from several works describing co-design processes and discussing design insights for tangibles and wearables to support physical rehabilitation.

For instance, [100] presented design recommendations for tangible interaction projects for stroke rehabilitation, including considerations for holistic activities, e.g. taking into account the social context of the stroke survivor, purposeful goals, the balance between rest and activity, and personalisation, among others. [87] described a co-design process in which they created modular objects for interactive therapy at home, focusing on the affordances of their shapes and their relation to everyday activities. Similarly, [13] engaged in a participatory design project in which they iterated on personalised interactive devices to support patients' long-term engagement with their rehabilitation activities. These works involved stroke survivors as participants in their co-design activities, along with health personnel such as physiotherapists and occupational therapists, and, in one case, family members [13].

Beyond stroke, [96] involved people with upper-limb disabilities in co-designing gaming wearables, and highlighted that while there is a broad line of research on wearables for rehabilitation, there is much less on non-corrective uses such as play. These works exemplify the importance of involving, as co-designers, the people who are directly affected and who will use the proposed designs.

Other co-design projects have recruited and collaborated only with health professionals and other experts in their application domain. For instance, [99] recruited clinicians and mindfulness experts and derived design principles for mindfulness-based embodied tangible interactions for the at-home rehabilitation of stroke patients, bringing forward a holistic approach based on the practice of mindfulness. Similarly, [32] carried out a couple of co-design workshops with occupational therapists to develop adaptive soft switches for youth with acquired brain injury. In [182], minimalist technology probes were employed as design probes for the co-design of physical training activities for children with motor challenges. These works illustrate how involving the relevant domain experts can also yield rich guidelines and insights for the initial stages of a design, which can later be evaluated by the patients who are intended to use it.

2.5 Chapter Takeaways

- External focus of attention and Intercorporeal Biofeedback as guiding concepts.

- A rich assortment of movement-based design methods.

- Some classifications of these methods, which could be more comprehensive or actionable.

- Multiple embodied ideation toolkits, but a gap regarding multisensory feedback in response to movement.

- Several technologies and design processes for rehabilitation, and an opportunity to engage critically with the design of smartwatch-based apps. No existing applications for our specific application domain.

3 Methodological Considerations

This chapter draws on publication D [189].

3.1 Embodied Sketching

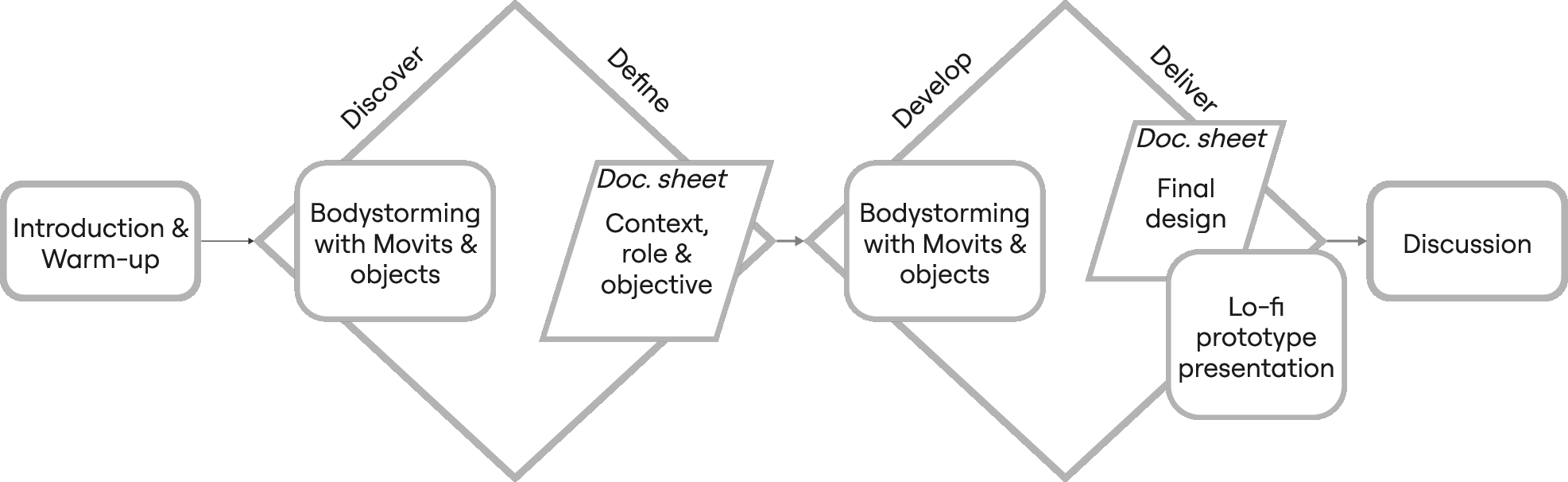

In the design activities described in this thesis, I employed a methodology based on Embodied Sketching [108]. Embodied sketching focuses on designing technology-supported activities holistically, involving the (co-)designers' own bodies to come up with, simulate, and examine the potential of design concepts before their actual development [108]. Embodied sketching involves three stages: sensitising, bodystorming for ideation, and participatory embodied sketching.

3.1.1 Sensitising

Sensitising involves familiarising designers and stakeholders with the relevant aspects of each other's domains in order to provide fertile ground for communication and ideation [108]. TODO(extend this… for example)

3.1.2 Bodystorming for Ideation

Bodystorming for ideation involves using the physical, social, and situated bodies to generate ideas [107,108]. Among various bodystorming techniques [107,129,146,184], I followed the one described by [107], targeting the design of playful physical and social action [107,108].

In this line, bodystorming [108] prescribes the following rules:

- “Show, don’t tell”, i.e. participants should use their bodies instead of words;

- Participants should take turns to propose an initial core action, which they then collectively explore and extend into a whole activity “worth doing”;

- Participants can make use of the available design resources [191], including their bodies, the bodystorming basket, and the surrounding space;

- Ideas are explored until “exhaustion,” i.e. until no more ideas or variations emerge.

TODO(extend this… for example… we used it in)

3.1.3 Participatory Embodied Sketching

Participatory embodied sketching [108] consists of an embodied exploration of already-existing technologies—such as exploratory prototypes—in order to generate ideas for further possible applications.

TODO(extend this… for example)

3.1.4 Bodystorming Baskets

Related to the methodology of embodied sketching is the usage of a bodystorming basket [191]: an assortment of objects to support quick crafting and prototyping, including: general-purpose crafting material; affixing systems (velcro, safety pins, etc.); general training tools (e.g. elastic bands) and some specific to hand and arm training (e.g. grip strengthening devices); everyday tools and accessories related to the hands and upper limbs, e.g. gloves. Such an assortment can also include a toolkit of technology probes for embodied sketching to support movement-based technological possibilities and incorporate interactivity in the resulting designs.

TODO(extend this… for example)

The concept of bodystorming basket was introduced in [191], a conference paper which serves as the basis of Chapter 4.

3.2 Research through Design

Throughout this thesis, I followed a Research through Design (RtD) approach: a mode of inquiry that uses design practice and the design process as primary means of generating knowledge [52,57,213]. RtD integrates the act of designing as a central method of inquiry, and therefore studies and reflects on the stages of design, prototyping, iteration, reflection, and engagement with stakeholders. In this sense, the design trajectory and its implications become a central contribution, rather than merely a path towards an artifact that can then be evaluated.

Contributions in RtD can take multiple forms, including constructive, methodological, and conceptual or theoretical contributions [214]. Emerging conceptual knowledge in RtD should be seen as “provisional, contingent, and aspirational” [57]; it aspires to be “sometimes right” rather than “never wrong” [57]. Such a framing supports an understanding of RtD as exploratory and open-ended. Methodological contributions, in turn, involve the methods and approaches developed during the design process. Accordingly, I carefully report the methods followed in the design processes of this thesis in an attempt to capture reflection-in-action TODO(ref schön).

In this thesis, I take inspiration from [128], who proposed a vocabulary of design events to “articulate temporalities in design research with further care, nuance, and generativity”, which can support building “narratives that emphasize knowledge created along the way, and relieve pressure from the ‘final’ artifact.” [128] This perspective justifies documenting design activities with stakeholders, conceptual pivots, and emerging insights as consequential components of the knowledge process, rather than treating them as background context.

3.2.1 Prototypes and Loose Ends

Regarding contributions in this thesis, I make a conscious choice to include initial and intermediate prototypes and preliminary design concepts as valuable elements in the evolution of the design inquiry. I take inspiration from how [63] highlighted the value of “loose ends,” i.e. the “successful samples that are not suitable to the main inquiry of the present design research process, but that under certain circumstances can become starting points for new investigation lines.” I commend their critique of conventional reporting approaches, which, by focusing on functions and solutions, omit “failures” and “loose ends” in favour of a success narrative of the final outcomes [63].

Extending this view while reflecting on unreported prototypes, [173] highlighted the multifaceted functions that prototypes perform within RtD. They note that one of these functions involves “brokering relationships with participants and deconstructing opaque technologies” [173]. In my work, prototypes acted as mediators: they helped to establish and sustain engagement between designers and other stakeholders by providing tangible reference points for discussion, imagination, and critique. Through this mediation, the prototypes surfaced existing tensions and new perspectives that reoriented the design trajectory. They also supported “deconstructing opaque technologies” [173], making visible design aspects that would otherwise have been overlooked. Recognizing these functions underscores how prototypes actively generate knowledge throughout the design process, rather than serving solely as end results.

3.2.2 Messy Design Journeys

Inspired by previous works in RtD [40,58,76,128], in this thesis I embrace the complexity, nuances, uncertainty and temporality that characterised this design journey: I describe and engage with how the findings and challenges at each stage shaped and fed into the next ones, although not necessarily in a straightforward or expected way. TODO(develop these references)

In this sense, rather than presenting a streamlined story of linear progress and success, I made an effort to reveal aspects of our design journey that show the challenges, limitations, unexplored routes (or loose ends [63]) and even “failures” [58,76] that shaped it.

3.3 Design Drives

TODO(complementing positionality). In the design journey of my thesis project, there were design drives that guided how I developed my prototypes: minimalism, open-endedness, and generalisability.

3.3.1 Minimalism

In general, with the prototypes I designed and developed, I was interested in exploring to what extent a minimalist design could support the kinds of rehabilitation goals in our application domain. I looked for simple setups that would not require much involvement from their users (designers, therapists, patients). For instance, I reasoned that this would accommodate the needs of therapists and rehabilitation doctors, who usually do not have much time for their patients, let alone for setting up a complex technological device.

To inform my perspective, I drew on the concept of minimal computing. Originating in the digital humanities, minimal computing refers to an approach that advocates working within constraints, questioning the narrative that equates innovation with greater scale or scope [136]. Minimal computing foregrounds the question of what is necessary and sufficient for a given context, and advocates using the technologies that account for exactly that [136]. This approach echoes perspectives on computing within limits and similar concepts [37,121], which propose exploring computer-supported well-being in human civilizations living within global ecological and material limits [121]. I contend that considering constraints when designing new technologies is especially relevant in the current state of the world, where human activities have exceeded several planetary limits and therefore cannot be sustained [121]. Additionally, this approach is suitable in contexts where financial, time, or labour resources are scarce.

To implement my prototypes, I used commercially available devices (prototyping boards and off-the-shelf smartwatches) as development platforms, because I saw in them available, small, flexible, and wearable platforms that could be subverted for our purposes. In this sense, I found guidance in works addressing the possible roles of Interaction Design in sustainability, for example arguing for the value of reusing devices [23] and of not creating new custom hardware [68]. Custom hardware tends to be more expensive to create, control, modify, maintain and distribute [68], so by using existing hardware (drawing inspiration from the concept of salvage computing [37]), I aimed to minimise such costs while keeping what was necessary and sufficient [136] for the task at hand.

In the prototypes that I introduce in this thesis, minimalism was present on different fronts: the number of devices in use and their size, the types of interactions, the user interface patterns, the degree of configuration, the relatively small number of features, and the fact that the devices were meant to be used only during the activities (and therefore not at all times).

3.3.2 Open-endedness and Generalisability

Previous works on minimalist technology probes [105,106,180–183,186] have shown how such probes can complement activities within different movement domains, reflecting a considerable degree of generalisability. TODO(expand open-endedness) In this line, I found an appropriate theoretical foundation for framing my minimalist designs in the strong concept of Intercorporeal Biofeedback [183], described above. It proposes the role of interactive technology as a mediator that can support different actors in making sense of motor learning processes together. Designs that follow this strong concept are open-ended, allow for a shared frame of reference using multisensory feedback, enable guiding attention from and to the actions, and are considered part of the whole activity [183].

3.4 Ethical Considerations and Data Management

In this work, several participants were involved in the design activities that served as the basis for the insights and requirements of the multiple designs I implemented. The participants signed an informed consent form regarding the management of their personal data (video and voice) during the sessions they participated in. These personal data were handled according to the data management plan in place for the corresponding research projects. TODO(provide details?) Participants received no monetary compensation.

The activities were approved by the Ethical Committees of the university. For the case of the study with patients described in Chapter 6, the Ethical Committee of Getafe University Hospital also approved the study protocol that was developed as part of this work.

The design activities described in this work were held in private rooms of the university or the hospital.

3.5 Chapter Takeaways

4 Design Resources in Movement-based Design

This chapter draws on publication A [191].

How can we design wearable technologies to support physical rehabilitation and training? One possible path lies in the use of movement-based design methods. As previously discussed, movement-based design methods have increasingly been adopted in several domains due to their capacity for providing early insights into the embodied experience of participating stakeholders [201]. They can be used in multiple phases of a design project, ranging from sensitising exercises to evaluation [108]. However, while some methods are known and documented, these are not always well-suited for the specific characteristics of a design project. One has to consider the requirements, goals, limitations and possibilities, context, available resources, and emerging contingencies; as well as when in the design process the methods may be used.

In this chapter, I introduce a characterisation study that aimed to map the features of these methods and identify how they can be applied in different application domains. This work resulted from a collaboration with an international movement-based design consortium working together in the Method Cards for Movement-based Interaction Design (MeCaMInD) project, supported by Erasmus+. As a consortium, we developed a comprehensive characterisation of movement-based design methods to guide designers in selecting, adapting or creating their own methods. The goal was to identify salient characteristics of the methods that influence their applicability in different contexts. For this purpose, we collected and analysed methods that design researchers had used in specific contexts, some adapted from previously known methods and others created from scratch.

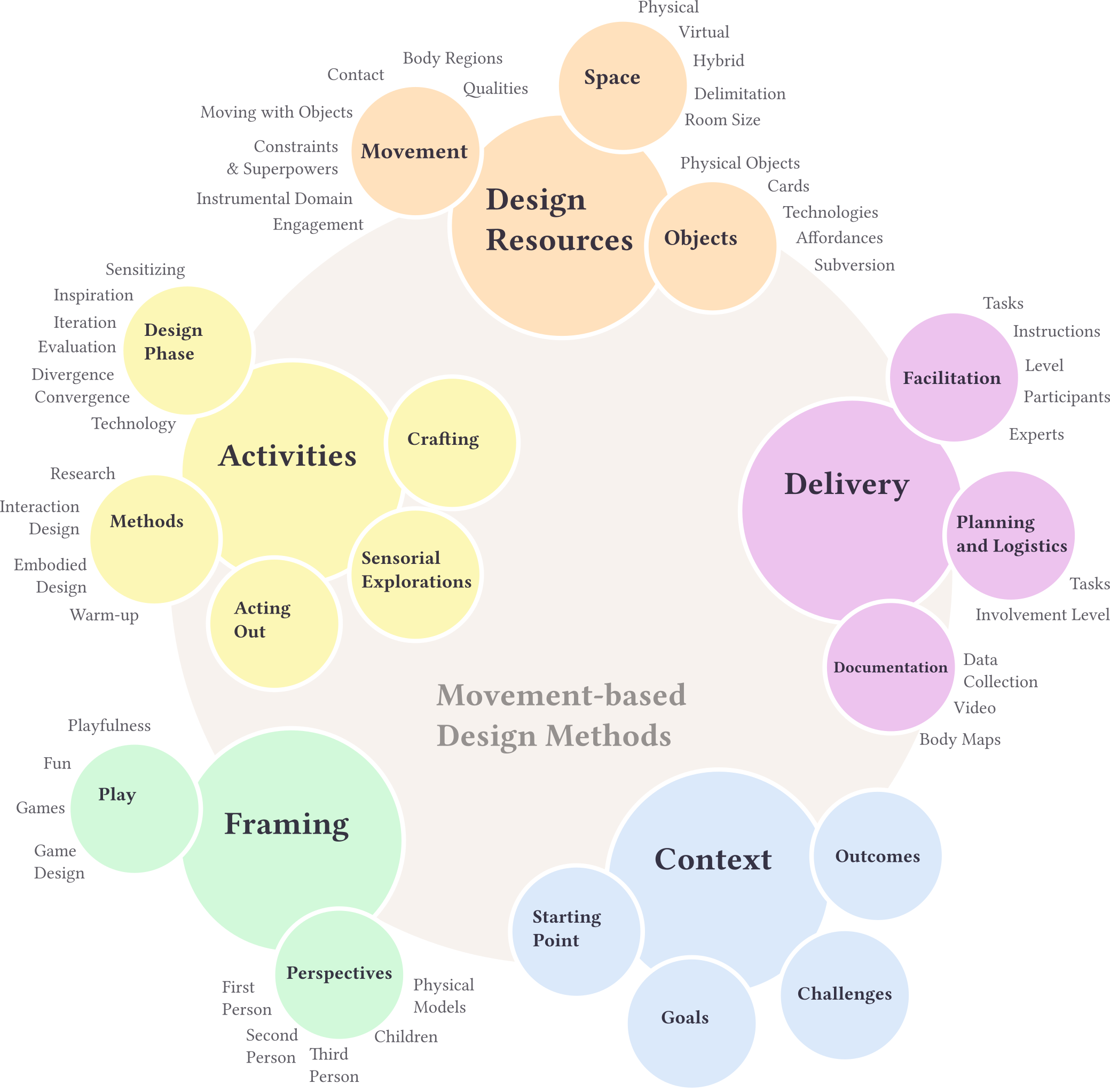

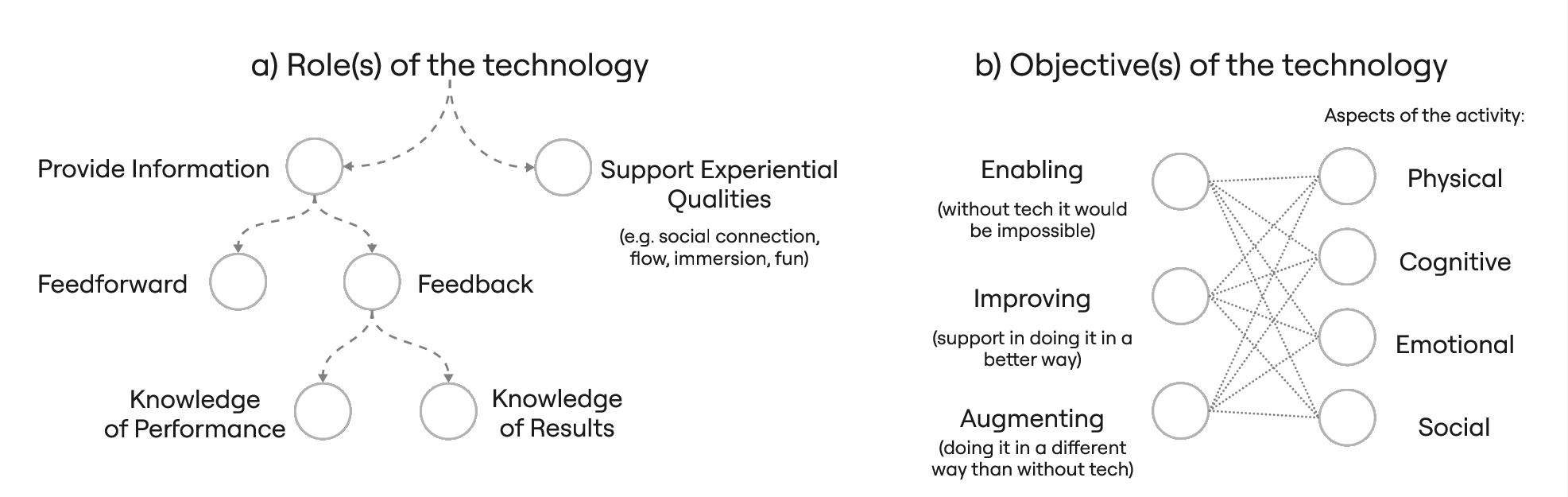

Through a series of workshops, we analysed a total of 41 key movement-based design methods used in 12 interaction design projects from the consortium. We characterised and classified the methods using a comprehensive thematic analysis [25] with a bottom-up approach. We obtained 17 categories that encompassed the significant characteristics of our corpus, and arranged them into five main groups: Design Resources, Activities, Delivery, Framing, and Context (see Fig. 1).

This work was a collaborative effort, so in some instances in this chapter I use “we” to refer to what we did together. As the main author, I participated in all the analysis along with EMS, and I organised the materials and led the research activities, coordinating the team. Note that I refer to the collaborators using the acronyms introduced above. Based on the resulting analysis, I wrote most of the work and created the corresponding figure.

In this chapter, I introduce the methodology we used for the analysis. Next, I present the core considerations related to each category and focus the discussion on the Design Resources group. Finally, I provide action points and recommendations that ground the Design Resources in the practice of using movement-based design methods.

4.1 Characterisation of Movement-based Design Methods

In this work, I collaborated with design researchers from six institutions participating in the Method Cards for Movement-based Interaction Design (MeCaMInD) project, an international Erasmus+ project focused on movement-based design methods. They facilitated the interaction design projects that constituted the original data corpus, reporting on the movement-based design methods used for the different stages of their design processes. For each method, they reported its description, account of logistics and facilitation, benefits and outcomes, and reflections.

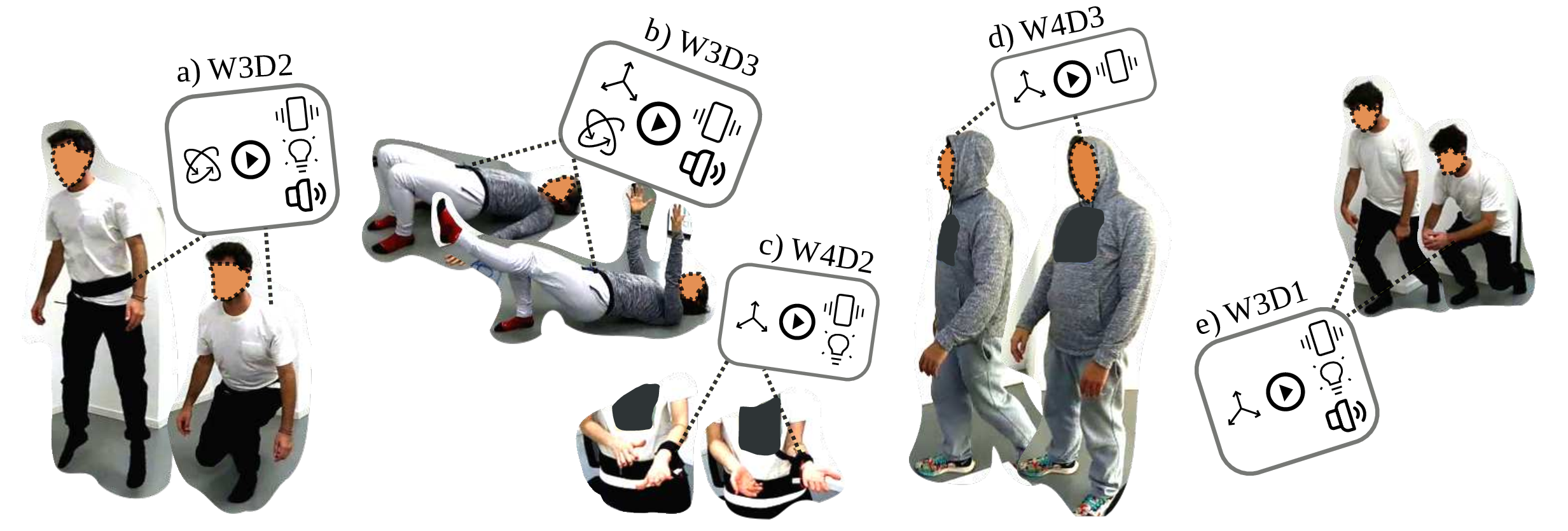

The following are the 12 interaction design projects that were reported, each one using between one and seven methods. From this, we collected a corpus of 41 descriptions of movement-based design methods (See Tbl. 1 for their names and IDs.)

- ACHIEVE: Design of a playful interactive supermarket environment for children to foster a transition to healthier and more sustainable food consumption.

- KOMPAN Workshop: Concept ideation for outdoor fitness equipment for playful fitness training. Participation of students along with designers from the playground company.

- Astaire [107,108,212]: Design of a collocated MR dance game for two players: one inside and the other outside VR.

- Super Trouper [105–107,109,111,182,184]: Methods for training body awareness and control in children with motor difficulties, combining circus training and interactive technology.

- Magic outFit [93,108,168]: Design of wearable technologies for sensorial changes of body perceptions to support physical activity.

- Sense2makeSense: Explorations in opening the design space of immersive and multisensory data representation.

- LearnSPORTtech [179–182,184,186]: Design of wearable technology to support sports and fitness practices through sensory feedback.

- Tangibles [38,39]: Co-design for upper limbs therapy for children with Cerebral Palsy employing interactive tangibles.

- DigiFys [11]: Research in children’s outdoor play and interactive installations to support it.

- Diverging Squash [98]: Single-player VR game inspired by racket ball.

- GIFT [196]: Museum experiences enriching physical exhibitions with digital content on smartphones.

- Online Course in Embodied Interaction [197]: Course in Embodied Design adapted to be taught online during the COVID-19 pandemic.

| Project | Code | Method |
|---|---|---|
| ACHIEVE | Ach1 | Somaesthetic field trips |
| | Ach2 | Somaesthetic body scan and body mapping |
| | Ach3 | Generative bodystorming |
| | Ach4 | Role-playing and improvisation |
| | Ach5 | Online re-enactments |
| | Ach6 | Puppeteering |
| | Ach7 | Wizard of Oz + Informances |
| KOMPAN Workshop | KOM1 | What can I do with this? |
| | KOM2 | Video sketching |
| | KOM3 | Play moods and quality cards |
| | KOM4 | Explore the movement aspect |
| | KOM5 | Action mock-up |
| | KOM6 | Play in context |
| Astaire | Ast1 | Warm-up games |
| | Ast2 | Playing off-the-shelf VR and MR games |
| | Ast3 | Embodied exploration and bodystorming with the affordances of MR |
| | Ast4 | Embodied exploration and bodystorming with off-the-shelf VR games |
| | Ast5 | Embodied explorations to fine-tune the game |
| Super Trouper | SuT1 | Warming-up to introduce and sensitize participants to tech and exercises |
| | SuT2 | Training sessions turning into participatory Embodied Sketching |
| | SuT3 | Bodystorming with experts |
| | SuT4 | Bodystorming with cards |
| Magic outFit | MoF1 | Dynamic body maps and keywords to characterise energising moments |
| | MoF2 | Barriers to physical activity cards |
| | MoF3 | Somatic dress-up for movement and sensation awareness |
| | MoF4 | Brainstorming based enactment |
| Sense2makeSense | S2M1 | First-person sensorial exploration and materialization of data representations |
| | S2M2 | Dolls to facilitate feeling and acting like your persona |
| | S2M3 | Body and sensory cards to inspire ideation |
| | S2M4 | Video prototype to capture design and scenario |
| LearnSPORTtech | LSt1 | Embodied explorations of technology use |
| | LSt2 | Technology sensitization |
| | LSt3 | Sensory Bodystorming |
| Tangibles | Tan1 | Field studies and short ethnography |
| | Tan2 | Interaction Relabelling applied in co-design |
| | Tan3 | Acting out movements |
| DigiFys | DiF1 | Long-term play engagement intervention in outdoor play |
| | DiF2 | Short-term play engagement intervention in outdoor play |
| Diverging Squash | DiS1 | VR Bodystorming |
| GIFT | GIF1 | Sensitising towards human practices |
| Online Course in Embodied Interaction | OEI1 | Online Bodystorming |

The thematic analysis [25] was performed by the UC3M team (EMS, LTV, OVH, ATJ and me) and consisted of the following steps:

- EMS, LTV, OVH and I labelled these methods according to salient features and characteristics.
- The same four people, plus ATJ, categorised the resulting characteristics using a bottom-up approach.
- EMS and I refined the categorisation and obtained meaningful subcategories.
- EMS, LTV, ATJ and I grouped these categories and selected the group to elaborate on.
- I asked the facilitators to comment on the categories and results and to provide more illustrative details to articulate them.

I provide more details about the process below.

4.1.1 Characterisation

The labelling and characterisation process was performed by EMS, LTV, OVH and me. We printed the method reports on large sheets and arranged them on the floor of a closed space. To characterise the methods, we used sticky notes on which we wrote sentences or individual concepts that best described them, and we tagged each note with its corresponding project and method name. This approach aimed to gather insights bottom-up. Thus, we did not come into the process with preconceived categories or specific aspects to look out for. We made sure that at least one embodied design expert covered each technique, and that every technique was characterised by at least two people.

4.1.2 Categorisation

Once we had the sticky notes as working material, EMS, LTV, OVH, ATJ and I gathered for a large initial categorisation session lasting over three hours in a room of approximately 50 m². This happened collaboratively, on-site, and preserving the bottom-up approach. We placed the sticky notes randomly across half of the room floor, independently of other notes from the same technique or project. Each note carried a short, relatively cryptic code so that we could later trace it back to its method and project. We then moved through the space simultaneously, reading and surveying the notes and looking for patterns and similarities between them.


As this activity continued, we started to notice new categories. We grouped relevant notes in particular areas of the space and announced each new group to the others (e.g. “There’s a group about Objects in this area!”), who responded by bringing relevant notes they were aware of. During the process, these clusters would transform, grow, get divided into subcategories, or be integrated as subcategories of others. We also drew interconnections between categories, either by using proximity to indicate their closeness or with coloured threads linking related notes and categories. Finally, we documented the resulting map of categories with photos. In a debrief session, we talked about the experience and our insights during the process, and concluded that some categories still needed revision and further connections with related ones.

Next, EMS and I held further categorisation sessions. We revised big, unfocused, or complex categories at the level of notes, finding overarching categories and their relations to each other, and also deepening and refining the findings from the first big session. This allowed for an increased level of detail and led to finding clusters within categories, merging closely related clusters, naming and revising the names of clusters, and surfacing interconnections. Additional sessions were needed to trace which methods and projects were involved in each category. In the end, the process yielded 17 categories.

4.2 Categories and Groups

In our analysis, we identified 17 categories across the 41 movement-based design methods reported by the facilitators of 12 movement-based interaction design projects. We arranged them into five groups: Design Resources, Activities, Delivery, Framing, and Context (see Fig. 1). These categories and groupings are not orthogonal, meaning that several of them can characterise a given method or project.

In this section, I introduce the groups and categories to provide a sense of their components. Note that the names of the categories are written in italics and the names of subcategories are written in bold.

4.2.1 Design Resources

This is the main emerging group of categories, on which I focus later in this chapter. It contains the categories of Movement, Space and Objects.

4.2.2 Activities

The Activities group contained the categories of Design Phase, Methods, Acting Out, Sensorial Explorations, and Crafting.

4.2.2.1 Design Phase

We found that movement-based methods were used across different Design Phases. They supported not only Sensitizing and Inspiration but also the Iteration and Evaluation stages of the design process. As such, they were adopted in both the Divergent and Convergent phases. Additionally, some of the projects leveraged existing Technologies during these activities.

4.2.2.2 Methods

Under Methods, we grouped references to already existing design and research methods. Regarding Research, we found some references to field studies and ethnography. Concerning design, we found several references to classical Interaction Design techniques such as Brainstorming, Scenarios and personas, Participatory design, Wizard of Oz, and Puppeteering. Additionally, there were mentions of already existing Embodied Design and movement-based methods, especially Embodied Sketching [108] and bodystorming [107,129,146,184]. We identified Warm-up techniques across projects as an important component of embodied methods.

4.2.2.3 Acting Out

Methods in the corpus used Acting Out to come up with, materialize, and iterate design ideas, or as part of a convergence process. It allowed participants to flesh out, experience and see key action sequences. Role-playing was used to iterate ideas in the following ways: by testing ideas within a particular situation and adjusting them iteratively; by tapping into human-like interactions, e.g. exploring different social roles; or by filtering ideas and indicating improvements. It was also used to achieve joint sense-making as a group and to share ideas. Role-playing was mostly reported in combination with improvisation.

4.2.2.4 Sensorial Explorations

Under Sensorial Explorations, we grouped notes about activities aimed at increasing awareness of specific sensory modalities like vision, hearing or touch, either individually or in the form of multisensory feedback. These activities were used to inspire or iterate designs, and typically made use of bodystorming (particularly Sensory Bodystorming [184]) with physical probes that had characteristic tactile and sound qualities.

4.2.2.5 Crafting

Crafting was adopted to create prototypes of interactive experiences, controllers and costumes while making use of readily available materials.

4.2.3 Delivery

The next group, Delivery, contained the categories of Facilitation, Planning and Logistics, and Documentation.

4.2.3.1 Facilitation

Within the Facilitation category, we compiled the following list of Facilitation Tasks, described and used across several projects:

- Arranging the meetings,

- Curation of materials (objects, references, words) to use and explore,

- Creation of a safe creative space,

- Communication of activities,

- Time tracking,

- Supporting the flow of ideas,

- Suggesting possibilities and alternatives,

- Encouragement of participation,

- Monitoring of energy and engagement levels,

- Balancing between playfulness and goal-oriented mindsets,

- Guiding and demonstrating actions,

- Guiding discussion and reflection,

- Lightweight documentation,

- Providing safety measures.

Additionally, we found several mentions of having a predefined set of Instructions or rules for the facilitators or the participants to follow. These supported the smooth development of the activities because they:

- Promoted a clear sequence of events,

- Allowed focusing on specific creative guidelines,

- Helped to coordinate when facilitators were part of the process, and

- Helped facilitators to feel more confident during the process.

Regarding the involvement of the facilitators, the required Facilitation Level varied across the methods in the corpus. Notably, methods that used digital technologies reported needing more time, energy and resources. Finally, we found some reflections that considered the context of the Participants of the design methods, either as a target audience or as designers in the project. The projects prioritized accommodating different participant backgrounds, abilities, needs and limitations. We found these considerations concerning both physical movement and the use of digital technologies. Methods in which Experts were participants tended to emphasize co-creation with them. These methods clearly leveraged the experts’ skills and knowledge, for example by having them provide detailed feedback, develop or introduce technologies, or guide somatic and movement-based activities.

4.2.3.2 Planning and Logistics

An important complement to Facilitation was the category of Planning and Logistics. Under Planning and Logistics Tasks, we grouped considerations and reflections about the following:

- Activity preparation: selection of methods, scripting of the sequence of events for the sessions, requirements listing;

- Preparation of resources like props and cards, e.g. by designing, printing, obtaining, arranging, and carrying them;

- Management of space and time for the activities;

- Setup and use of digital technologies, experiences or assets;

- Preparation and deployment of documentation strategies and equipment; and

- Consideration and mitigation of safety and legal risks.

Methods varied in the Involvement Level they required for planning and logistics. A low involvement level occurred when there was a low requirement for resources, when these resources were easily available, when the facilitators or participants had high expertise, or when the activity had relatively low stakes. Conversely, methods that used complex technologies and setups like Virtual or Mixed Reality experiences, or methods that used several ad hoc elements such as custom-made cards or body maps, reported requiring considerable effort in planning and logistics.

4.2.3.3 Documentation

We found that the Documentation of activities was an important component in the Delivery of the movement-based design methods we analyzed. In this category, we grouped considerations regarding Data collection in general and the use of video and body maps in particular. Video recording was leveraged not only as an archive of evidence to evaluate after the activities but also as a creative medium for participants to prototype their ideas. Several methods adopted Body Maps so that participants could observe and communicate their body states, sensations or wearable prototypes across stages of the activities and the design process.

4.2.4 Framing

The Framing group contained the categories of Play and Perspectives.

4.2.4.1 Play

Under the Play category, we grouped notes regarding playfulness, fun, and game design. Several projects had Playfulness either as a design goal or as a resource to instigate engagement and curiosity. Similarly, a few projects involved the concept of Fun as a goal or as a resource within their design methods. One way of fostering fun was to include pre-existing Movement-based games in the design activity. Finally, some projects focused their movement-based methods on playing with, exploring, ideating and iterating key actions that were envisioned to be at the core of the designed activity. We found that these related to core mechanics in Game Design and to embodied core mechanics in playful activity-centric design [102,195].

4.2.4.2 Perspectives

The perspective that participants took in relation to the target audience emerged as an important consideration. We found methods that worked from a First, Second or Third-person perspective, and even some that combined them [166]. This category also covered users’ perspectives, which were strongly related to the target group of the design. Specifically, Children were supported through several techniques, both with technology (e.g. using perspectives in VR in ACHIEVE) and without it. Finally, we found that Physical Models allowed for first- and third-person perspective shifts.

4.2.5 Context

Some notes related very specifically to the Context of the projects we studied, both their motivations and their results. Starting Points and Outcomes were strongly tied to the given projects. The range of possible Goals for projects and the movement-based methods they used included the following: understanding; reflection; focus; embodied core mechanics; and changes in physical activity, behaviour or self-perception. Finally, common Challenges faced during these methods and projects included social and ethical concerns, levels of expertise in relevant areas, the management of engagement during activities, and the use of VR with all its technical requirements.

4.3 Design Resources in Detail